In 2012, the IETF announced that a new version of HTTP was in development to replace its aging predecessor. The new protocol would be faster, simpler, and easier to implement than the original, but would rely on the same underlying principles of client-server communication. Google’s experimentation with SPDY had shown that it was possible to create a new, faster, yet still backwards-compatible protocol, and the company invited the task force to build on its design. Three years later, in May 2015, HTTP/2 was published as RFC 7540.

Adoption of HTTP/2 was hindered right from the start when, just one month after the new protocol was published, Google announced that it would be phasing out SSL in favor of the more secure TLS protocol. Safari followed suit. These announcements alienated Linux-based operating systems since they did not support OpenSSL 1.0.2, which was required to run the newly developed TLS extension ALPN; right off the bat, a sizeable portion of web servers were unable to use HTTP/2. The backwards-compatible nature of HTTP/2 further slowed its adoption, since nothing forced sites to switch. And if a site was experiencing speed problems, HTTP/1.1 was so old, studied, and configurable that workarounds like “spriting” images, “sharding” TCP connections, and “pipelining” client requests offered plenty of ways to gain speed without switching protocols. In its first year and a half, HTTP/2’s adoption rate barely reached 10%.

Now, as we approach its two-year anniversary, adoption of HTTP/2 has climbed to only 13.6%.

As the graph below shows, the rate of increase is also fairly constant. There must be some reason that HTTP/2 is still stuck in the gate. In this article, I intend to make the case that the low rate of HTTP/2 adoption is due to the simultaneous rise of mobile Internet use and HTTP/2’s failure to translate its broadband strengths to that medium.

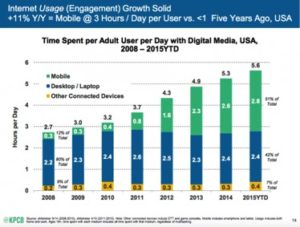

Within the last few years, marketers have had to confront the reality that people are using mobile phones to access the Internet at a much higher rate: in 2014, mobile Internet use passed desktop use, and, according to a March 2017 post by Dave Chaffey of SmartInsights.com, “The latest data shows… Mobile digital media time in the US is now significantly higher at 51% compared to desktop (42%).” The graph below from Adobe Analytics shows that this surge is recent, and, considering that mobile users made up only 30% of Internet usage as recently as 2013, even the least mobile-oriented industries should be taking measures to keep up with the tide.

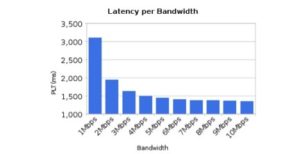

These developments are probably why it has been hard to ignore the marketing phrases of phone networks over the last couple of years that claim to have the latest 3G, 4G, and 4G LTE networks. While a lot of these claims are simply marketing big-talk, these technologies have produced a large reduction in mobile Internet load times: the average mobile speed in the United States increased 30% from 2015 to 2016, reaching nearly 8 Mbps. This is a crucial point, too. In his paper “More Bandwidth Doesn’t Matter (Much),” h2 developer Mike Belshe points out that, once bandwidth increases past 5 Mbps or so, the amount of time it takes to load a page is hardly affected at all, since latency becomes the rate-determining step.
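
Belshe’s point can be illustrated with a toy model in which page load time is the sum of transfer time and time spent waiting on round trips. This is only a sketch: the page size, the number of round trips, and the 100 ms round-trip time are hypothetical values chosen for illustration, not figures from his paper.

```python
def page_load_seconds(page_bytes, bandwidth_mbps, rtt_ms, round_trips):
    """Toy model: time moving bytes plus time waiting on sequential round trips."""
    transfer = (page_bytes * 8) / (bandwidth_mbps * 1_000_000)
    waiting = round_trips * (rtt_ms / 1000)
    return transfer + waiting


PAGE_BYTES = 1_000_000  # hypothetical 1 MB page
ROUND_TRIPS = 40        # hypothetical number of sequential round trips

# Raise bandwidth while holding latency fixed at 100 ms.
for mbps in (1, 2, 5, 10, 20):
    t = page_load_seconds(PAGE_BYTES, mbps, rtt_ms=100, round_trips=ROUND_TRIPS)
    print(f"{mbps:>2} Mbps, 100 ms RTT: {t:.2f} s")

# Halve latency while holding bandwidth fixed at 5 Mbps.
t = page_load_seconds(PAGE_BYTES, 5, rtt_ms=50, round_trips=ROUND_TRIPS)
print(f" 5 Mbps,  50 ms RTT: {t:.2f} s")
```

Under these assumptions, quadrupling bandwidth from 5 to 20 Mbps saves about 1.2 seconds, while halving the round-trip time at 5 Mbps saves 2 seconds.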

One would think that a medium like mobile browsing would greatly benefit from HTTP/2, which derives much of its speed from addressing the latency issues that plague mobile Internet use in particular. Recent developments in mobile networks have left the door wide open for this important adoption scenario to unfold, too.

To understand the point I’m trying to make, it is important to understand the nature of mobile network latency. When one tries to access the Internet through a mobile phone, the device must first contact a radio tower to request permission to connect to the Internet. The brain that controls such transfers for all radio communication is called the Radio Resource Controller (RRC), and it decides who can talk when, how much bandwidth they receive, and more. The problem with mobile phones is that the radio they rely on to make this request drains too much battery to maintain constant contact with the RRC. Instead, phones must make new connection requests to the RRC every time they want to browse the Internet. With past networks, this step alone could take up to several seconds, but nowadays it usually takes between 10 and 100 ms thanks to provider investments in the new generations of 3G (CITE).

Unfortunately, as Ilya Grigorik points out in his book High Performance Browser Networking, the recent improvements in mobile technology have only improved the first half of the mobile Internet connection process. After a phone has connected to the RRC, its traffic still has to travel from the tower to the Internet through the carrier’s core network, first through the Serving Gateway, then through the Packet Gateway. This is why the first packet of a session takes so long to arrive, an effect that also shows up in the cellular study discussed later in this article (Grigorik 132). The adoption of new mobile networks has mostly reduced the latency incurred when a user connects to the tower and negotiates a connection with it; everything after that is still subject to the wireless carriers’ core network and Internet routing latency. In short, latency is still a problem for even the most up-to-date networks (Grigorik 128-132).

The issue with such developments, as Mr. Grigorik points out, is the cost and time required to roll out new networks. He claims that, though there is already a lot of hype concerning the next generation of networking, the companies involved will not be able to afford to implement new technology as quickly as it comes out, so old networks will be around for “at least another decade.” Mobile site developers, he advises, should plan for this (Grigorik 105).

One of the primary goals of HTTP/2 is to reduce latency through a variety of means, and early tests of SPDY showed that it was 23% faster for mobile browsing (https://developers.google.com/speed/articles/spdy-for-mobile). For our discussion, this sort of latency-derived speed boost at a time when network development has stalled would mean that aggressive companies could deploy HTTP/2 on their devices and websites and reap the benefits of h2’s speed. For sites with thousands of sessions a day, on a medium where each additional second of page load time reduces conversions by 7%, there is an astronomical amount of money that h2 could be saving companies in 3G’s wake (https://blog.kissmetrics.com/loading-time/).
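
To put the 7% figure in concrete terms, here is a back-of-the-envelope sketch. The shop below is entirely hypothetical (session count, conversion rate, and order value are made up), and the calculation assumes the 7% drop applies linearly for each extra second of load time.

```python
def daily_revenue_lost(sessions_per_day, conversion_rate, order_value,
                       extra_seconds, loss_per_second=0.07):
    """Revenue lost per day, assuming a linear 7% conversion drop per extra second."""
    baseline = sessions_per_day * conversion_rate * order_value
    lost_fraction = min(1.0, loss_per_second * extra_seconds)
    return baseline * lost_fraction


# Hypothetical shop: 5,000 sessions a day, 2% conversion, $50 average order.
lost = daily_revenue_lost(5_000, 0.02, 50.0, extra_seconds=1)
print(f"Lost per day for one extra second: ${lost:,.2f}")
```

For this made-up shop, one extra second of load time costs roughly $350 a day, or well over $100,000 a year.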

The initial mobile speed results proved misleading, however, and multiple investigations of h2 have shown that it may not be the panacea we were hoping for. To understand why h2 is supposed to be a latency-killer (and why I believe its adoption depends on mobile performance) one has to understand the nature of HTTP Pipelining and HOL blocking.

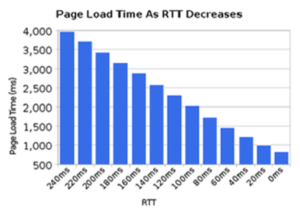

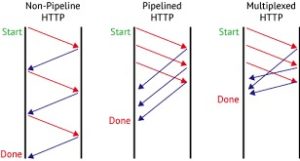

One of the primary elements in h2’s design is its reduction of latency by using multiplexing. For those who don’t know, HTTP is a “stateless” protocol, meaning that a server has no memory of its communication with any client. Each client request must be processed in its entirety. Before HTTP/1.1, the problem was that the underlying TCP connection would only last for a single request: every time a request was made, a new TCP connection was required. Every object needed to load a page therefore incurred the round-trip latency penalty of setting up a connection before any data could flow. The amount of time that networks spent simply waiting was too high.

HTTP/1.1 addressed this issue by telling servers to keep the connection open after they dispatch their last packet. This is called a “persistent” connection: clients don’t have to establish an all-new TCP connection for every request they send. Persistent connections also allow multiple requests to be queued up for processing: the client can send several requests in a row without waiting for each response. As long as the responses come back in the order in which the requests were sent, no information is lost. This is called “HTTP pipelining,” and it speeds up the process dramatically since there is no need to establish a TCP connection for every new request.

HTTP pipelining still suffers from the fact that each request has to be answered in the order that it was sent, however: if one response is slow, or one of its packets is lost and has to be resent, every response queued behind it must wait. This is called “head-of-line blocking” (HOL blocking): since the line has to proceed in order, the entire process can be halted by the leader. HOL blocking is a major reason why latency so often limits page load speed.

Modern browsers have sought to mitigate HOL blocking by opening more TCP connections, allowing more lines to process more leaders at the same time. This is also an imperfect solution: every additional TCP connection has its own setup cost and competes with the others for network resources, so that, past a certain point, the overhead of the extra connections slows the process down.
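
The effect of in-order delivery, and of spreading requests over several connections, can be sketched in a few lines. This is a simplified illustration, not a network simulation: the function names and the response times (in milliseconds) are hypothetical, and requests are assigned to connections round-robin.

```python
def in_order_delivery(ready_times):
    """Each response is delivered only after every response ahead of it in line."""
    delivered = []
    latest = 0
    for ready in ready_times:
        latest = max(latest, ready)
        delivered.append(latest)
    return delivered


def sharded_delivery(ready_times, connections):
    """Assign requests round-robin to several connections, each with its own line."""
    delivered = [0] * len(ready_times)
    for c in range(connections):
        indexes = range(c, len(ready_times), connections)
        times = in_order_delivery([ready_times[i] for i in indexes])
        for i, t in zip(indexes, times):
            delivered[i] = t
    return delivered


# Hypothetical times (ms) at which each response is ready; the first one is slow.
ready = [900, 50, 60, 40]
print(in_order_delivery(ready))    # one pipelined connection
print(sharded_delivery(ready, 2))  # two connections
```

On a single connection the slow first response holds back all four. With two connections, only the responses queued behind it on the same connection are delayed.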

HTTP/2 aimed to solve HOL blocking by “multiplexing,” a process that carries multiple simultaneous streams over the same TCP connection by breaking each stream into “frames.” A frame is the smallest unit of HTTP/2 communication: it carries a piece of one request or response and is tagged with the ID of the stream it belongs to. Streams are thus processed piecemeal, each one advancing bit by bit whenever one of its frames takes a turn on the TCP connection. “Interleaving” frames allows multiple requests to be carried out at the same time over one persistent TCP connection, and eliminates the need to establish more connections to do the same amount of work. At the HTTP level, the key benefit is that a slow response no longer holds up the others, since frames from other streams keep flowing around it.

The catch is what happens when a packet is lost. TCP delivers data to the application strictly in order, so when a packet goes missing, everything that arrives after it waits in the receiver’s buffer until the lost packet is retransmitted. Every stream sharing the connection is held up in the meantime, in much the same way that requests were held up behind one another under pipelining. This is also head-of-line blocking, but it is the result of TCP’s in-order requirements rather than HTTP’s inability to process requests out of order. Though the latency incurred by packet loss is still present, multiplexing essentially moves the problem of packet loss to something that must be addressed at the TCP level. The developers of HTTP/2 realized this, but because developing an alternative to TCP would mean that the entire Internet would have to change how it communicated, reworking TCP was not something that was addressed in HTTP/2.
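
Interleaving can be sketched as follows. This is a deliberately simplified illustration: real HTTP/2 frames carry a binary header with a length, type, flags, and stream identifier, whereas the hypothetical `Frame` here holds only a stream ID and a payload, and the round-robin scheduling is an assumption made for clarity.

```python
from collections import namedtuple

# Simplified stand-in for an HTTP/2 frame: just a stream ID and a chunk of data.
Frame = namedtuple("Frame", ["stream_id", "payload"])


def interleave(streams, frame_size):
    """Cut each stream into frames and emit them round-robin on one connection."""
    offsets = {sid: 0 for sid in streams}
    frames = []
    while any(offsets[sid] < len(data) for sid, data in streams.items()):
        for sid, data in streams.items():
            start = offsets[sid]
            if start < len(data):
                frames.append(Frame(sid, data[start:start + frame_size]))
                offsets[sid] = start + frame_size
    return frames


def reassemble(frames):
    """Rebuild every stream on the receiving side using the stream IDs."""
    rebuilt = {}
    for frame in frames:
        rebuilt[frame.stream_id] = rebuilt.get(frame.stream_id, b"") + frame.payload
    return rebuilt


# Three hypothetical responses sharing one connection.
streams = {1: b"<html>...</html>", 3: b"body{}", 5: b"console.log(1)"}
frames = interleave(streams, frame_size=6)
print([f.stream_id for f in frames])
print(reassemble(frames) == streams)
```

The short stream finishes after a single frame while the longer ones keep taking turns, and the receiver can rebuild all three because every frame names its stream.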

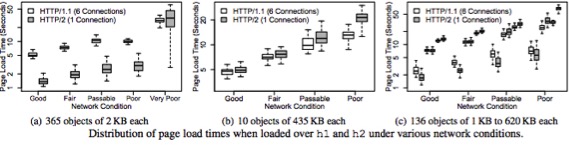

The results of testing HTTP/2 on mobile networks have been discouraging, to say the least. A recent joint study by Montana State PhD student Utkarsh Goel and Akamai Technologies’ Moritz Steiner, Mike Wittie, Martin Flack, and Stephen Ludin tested page load times for both HTTP/1.1 (pipelined over six TCP connections) and HTTP/2 over mobile networks. They monitored page load times for each protocol under a variety of conditions on a real mobile network to see how HTTP/2 would work under real browsing conditions. Their findings show that the performance of each version of the protocol depends on the prevailing network conditions, the number and size of objects requested, and the size of the web pages being accessed.

The first test in the study compared the performance of each protocol when requesting 365 objects averaging 2 KB apiece. HTTP/2’s load times were about half of those for HTTP/1.1 under all network conditions better than “very poor,” but under very poor conditions HTTP/2’s page load times skyrocketed to far above those of HTTP/1.1. When the same test was conducted for a relatively small number of large objects (10 objects averaging 435 KB), h1 actually outperformed h2 under all network conditions, and the slowdown under poor to very poor conditions was significantly worse for h2. Lastly, and most realistically, the team tested the retrieval of a moderate number of objects of all sizes for 2 MB, 8 MB, and 12 MB web pages. HTTP/2 only outperformed h1 at the 2 MB page size, barely kept pace with h1 under normal and fair conditions for the 8 MB page, and was soundly beaten under all network conditions for the 12 MB page (Goel et al.).

If h2 only performs better about half the time, there is little point in switching, and when you consider the fact that mobile Internet use is 1) growing rapidly, 2) prone to packet loss, and 3) often conducted under less than ideal conditions, it really makes no sense to adopt h2 for mobile use (Goel et al.).

The study has highlighted a clear weakness of h2: packet loss due to network congestion. The reason for this is that when multiple TCP connections are used in h1, the loss of a packet on one connection only causes the HOL blocking of that one connection. Since h2 multiplexing allows the use of only one connection, the loss of any one packet will hold up every object on that connection. What is ironic is that this is the same sort of problem that the mobile networks faced when they upgraded their networks: when one eliminates the problems caused by one step of the process, the next step of the process becomes the rate-determining step; the mobile networks still face Core Network latency in the same way that HTTP still faces the in-order nature of TCP.
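
A rough way to see why a single connection is more exposed is to compute the chance that a connection suffers at least one lost packet during a page load. This is only a sketch under stated assumptions: packets are lost independently, and the packet count and 1% loss rate are hypothetical numbers, not values from the study.

```python
def stall_probability(packets_on_connection, loss_rate):
    """Chance that at least one packet on a connection is lost (independent losses)."""
    return 1 - (1 - loss_rate) ** packets_on_connection


TOTAL_PACKETS = 600  # hypothetical page size in packets
LOSS_RATE = 0.01     # hypothetical 1% packet loss

# h2: every packet shares one connection, so any loss stalls every object.
h2 = stall_probability(TOTAL_PACKETS, LOSS_RATE)

# h1: packets are split over six connections; a loss stalls only its own connection.
h1_per_connection = stall_probability(TOTAL_PACKETS // 6, LOSS_RATE)

print(f"h2, one connection:       {h2:.1%} chance that every object stalls")
print(f"h1, each of six:          {h1_per_connection:.1%} chance that one sixth stalls")
```

With these numbers the single h2 connection is almost certain to stall at least once (about 99.8%), while any one of the six h1 connections stalls about 63% of the time, and when it does, only the objects on that connection wait.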

There are many reasons why mobile Internet use should be the driving force behind the adoption of HTTP/2: the escalating prominence of mobile users, the latency-susceptible nature of the mobile medium, the stagnation of mobile network development, and the backwards-compatibility of the h2 protocol all suggest that if HTTP/2 had behaved on mobile networks the way it was expected to, there would have been every reason for widespread h2 adoption on mobile sites. Instead, h2 was stopped in its tracks by our unwillingness (dare I say inability) to rework TCP. What does this mean for the future? Are we stuck with in-order TCP forever? The only alternative at the moment is the unreliable UDP, which is the lesser-used protocol of the two for good reasons.

Goel and his team believe that the way forward is to use multiple h2 TCP connections in the same way that browsers treat connections for h1. This brings up another question, though: will h2 tolerate additional connections? Will multiplexing experience more or less latency when operating under multiple connections? Should h2 have addressed TCP’s HOL blocking? Perhaps backwards-compatibility and TCP should be scrapped for a more long-term solution. One thing is for sure: the next few years are going to be very important for the future of mobile Internet use. If networks don’t match the rate of mobile Internet adoption with infrastructure investment, it may be a long time before mobile Internet use sees any improvement again. As for h2, it may have already missed its chance.

Additional Works Cited:

- HTTP/2 Performance in Cellular Networks – Utkarsh Goel, Moritz Steiner, Mike P. Wittie, Martin Flack, and Stephen Ludin

- More Bandwidth Doesn’t Matter (Much) – Mike Belshe

- ADI: Consumers Spending Less Time On Websites Across All Industries – Giselle Abramovich